How a Local AI Review Skill Is Reducing PR Round-Trips at Grindr

There was a ritual that preceded every PR submission.

Write the code. Run the tests. Review the diff one final time. Convince yourself it holds up. Submit. Then wait (sometimes for hours) only to receive a comment like "missed a null check" or "this breaks our MVI pattern" in return.

The feedback was never wrong. It was simply late. And every round-trip through review compounds the friction: context switching for the reviewer, rebasing, re-requesting, the entire cycle repeating.

Then we introduced the grindr-code-review skill.

What It Actually Is

Before pushing and opening a PR, you can trigger an AI-powered code review locally inside Claude Code (or Firebender). It reads your diff, loads the relevant architecture documentation, and returns structured feedback covering the same categories a senior engineer would address in a thorough review.

This is not a linter. It is not a formatting checker. It understands our MVI patterns, our Kotest standards, Compose recomposition issues, and potential memory leaks. It cross-references our actual internal documentation when forming its analysis.

The feedback is structured exactly as a human review would be: critical issues, warnings, minor suggestions, and a section acknowledging what was done well.

The first notable moment was when it identified a missing LaunchedEffect key that would have produced a recomposition loop. That is precisely the kind of issue that is easy to overlook during self-review and non-trivial to spot in a diff. A reviewer would have caught it eventually, but now it surfaces before the PR is ever opened.

How to Use It

The skill responds to natural language; simply typing "review my changes", "code review", or "review this code" in the chat is sufficient for it to infer the intent. That is the primary invocation path for most engineers.

You can also invoke it explicitly with arguments when you need to target a specific scenario. There are four modes, each addressing a common point in the development workflow:

Default — review staged changes:

/grindr-code-review

This is the standard invocation. Stage your changes, run the skill, review the output.

WIP review — review unstaged changes:

/grindr-code-review --unstaged

Useful for mid-development sanity checks. No staging required.

Full PR prep — review the entire branch against master:

/grindr-code-review --branch feature/your-branch-name

Run this immediately before opening the PR. It evaluates the complete branch diff, not just the current working state.

Focused review — review specific files:

/grindr-code-review --files app/src/main/kotlin/ProfileViewModel.kt

When a particular file contains the complexity you want examined, this directs the review accordingly.
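One way to picture the four modes is as four different diffs handed to the reviewer. The post does not describe how the skill collects its input, so the sketch below is an assumption: `ReviewMode` and `gitDiffArgs` are hypothetical names, and the git commands are simply the standard ones that would produce each diff.

```kotlin
// Hypothetical model of the four review modes. These names are illustrative
// and are not taken from the skill's implementation.
sealed interface ReviewMode {
    object Staged : ReviewMode
    object Unstaged : ReviewMode
    data class Branch(val name: String, val base: String = "master") : ReviewMode
    data class Files(val paths: List<String>) : ReviewMode
}

// Returns a git invocation that would yield the diff for each mode.
// Assumption: the skill may gather its diff differently.
fun gitDiffArgs(mode: ReviewMode): List<String> = when (mode) {
    // Changes already added to the index.
    ReviewMode.Staged -> listOf("git", "diff", "--staged")
    // Working-tree changes that are not yet staged.
    ReviewMode.Unstaged -> listOf("git", "diff")
    // Everything on the branch since it diverged from the base branch.
    is ReviewMode.Branch -> listOf("git", "diff", "${mode.base}...${mode.name}")
    // Staged and unstaged changes, limited to the given paths.
    is ReviewMode.Files -> listOf("git", "diff", "HEAD", "--") + mode.paths
}
```

Under these assumptions, `gitDiffArgs(ReviewMode.Branch("feature/your-branch-name"))` yields `git diff master...feature/your-branch-name`, which covers the whole branch rather than the current working state.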

What It Checks

The review covers five categories:

Code Quality: Kotlin conventions, null safety, naming, and anything likely to introduce ambiguity for the next engineer reading the code.

Performance: Memory leaks, Compose recomposition triggers, and patterns that could silently degrade runtime behavior.

Architecture: MVI patterns and Clean Architecture layer boundaries. It recognizes that the domain layer has no business importing Android classes and will flag violations accordingly.

Testing: Kotest standards, BaseSpec usage (BaseSpec is our internal base class that wraps Kotest's BehaviorSpec — always extend it instead of BehaviorSpec directly), and Turbine for Flow testing. It identifies when tests use runBlocking in contexts where it is inappropriate.

Bugs: Logic errors, unhandled edge cases, and the class of issue that does not surface in tests but manifests in production.
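To make the Architecture category concrete, here is a minimal sketch of the layer-boundary rule expressed as a plain text check. It only illustrates the rule and says nothing about how the skill reaches its conclusions. The function name `findAndroidImports` and the choice of `android.` and `androidx.` as forbidden prefixes are assumptions.

```kotlin
// Assumption: these package prefixes stand in for "Android classes".
val forbiddenPrefixes = listOf("android.", "androidx.")

// Returns the imported names in a domain-layer source file that point at
// Android framework packages. An empty result means the file is clean.
fun findAndroidImports(source: String): List<String> =
    source.lineSequence()
        .map { it.trim() }
        .filter { it.startsWith("import ") }
        .map { it.removePrefix("import ").trim() }
        .filter { imported -> forbiddenPrefixes.any { imported.startsWith(it) } }
        .toList()
```

A domain file that imports `android.content.Context` would be reported here, while one that imports only `kotlinx.coroutines.flow.Flow` would pass.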

What the Output Looks Like

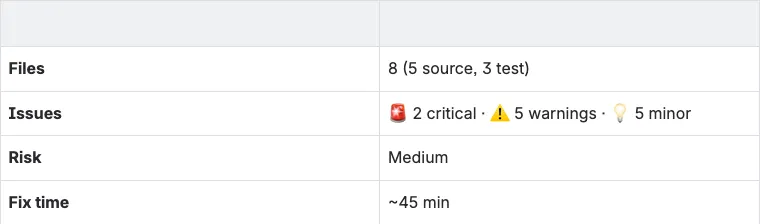

The review opens with a summary table: files reviewed, issue counts broken down by severity, an overall assessment, an estimated remediation time, and a risk level. The format is intentional — it gives you the signal you need to decide whether to fix now or ship and follow up.
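The shape of that summary can be sketched as a small data model. Everything here is hypothetical: the type names, the three severity levels, and the rule that any critical issue means "Needs Changes" are assumptions for illustration, and the estimated remediation time and risk level are left out because the post does not say how they are derived.

```kotlin
// Hypothetical severity levels mirroring the sections of the review output.
enum class Severity { CRITICAL, WARNING, MINOR }

data class Finding(
    val file: String,
    val line: Int,
    val severity: Severity,
    val message: String,
)

data class ReviewSummary(
    val filesReviewed: Int,
    val countsBySeverity: Map<Severity, Int>,
    val assessment: String,
)

// Builds the summary from a list of findings.
// Assumption: a single critical finding is enough to require changes.
fun summarize(filesReviewed: Int, findings: List<Finding>): ReviewSummary {
    val counts = Severity.values().associateWith { severity ->
        findings.count { it.severity == severity }
    }
    val assessment =
        if (counts.getValue(Severity.CRITICAL) > 0) "Needs Changes" else "Looks Good"
    return ReviewSummary(filesReviewed, counts, assessment)
}
```

Keeping the counts keyed by severity is what lets the summary answer the fix-now-or-follow-up question at a glance.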

Below is representative output from a real review session.

🎯 Review: FeatureViewModel — ⚠️ Needs Changes

🚀 Quick Wins

Fix these first — each takes < 5 minutes:

- FeatureUseCase.kt:12: Unused import → Remove line
- ItemListScreen.kt:15: Wildcard import io.mockk.* → Replace with explicit imports
- DataRepository.kt:89: Magic number 300 → Extract to named constant TIMEOUT_MS

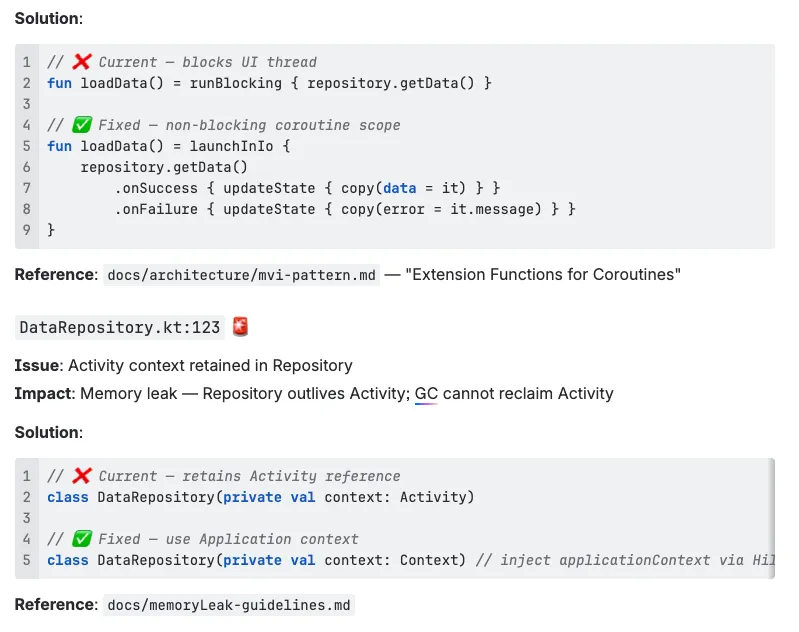

🚨 Critical Issues (Must Fix)

FeatureViewModel.kt:45 🚨

Issue: Using runBlocking in ViewModel

Impact: Blocks the UI thread on every call, causing ANRs and visible freezes

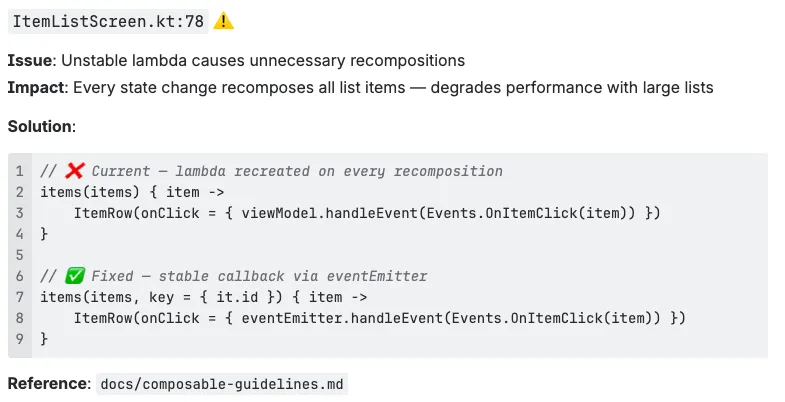

⚠️ Warnings (Should Fix)

✅ Positive Observations

- Proper MVI sealed interface hierarchy; state class is correctly marked @Stable

- Comprehensive test coverage with Given/When/Then structure throughout

- Correct use of launchInIo/launchInMain in all other ViewModels

Where Human Review Still Wins

This is a pre-review tool. It does not replace human code review.

It will not catch everything. It has no awareness of product context, the rationale behind a decision made six months ago, or the subtle implications of a change in a shared module that multiple teams depend on. That judgment belongs to your teammates.

What it does is front-load the mechanical feedback: the pattern violations, the clear issues, the comments that would otherwise read "per our standards, this should be X." Reducing that category of feedback allows the actual review conversation to concentrate on what genuinely requires human judgment: design decisions, tradeoffs, product implications, the things that only come from knowing the codebase and the people who depend on it.

The Takeaway

Run a code review before you open the PR. It takes under a minute and will surface issues you missed.

Your reviewers will still find things. That is the purpose of code review. But when the first round of feedback is "looks good, just these two items" rather than six threads of back-and-forth, everyone's time is more effectively spent.

The code does not get worse. The review simply gets faster.
